This is not a product review. It is a category story. QuantumLoop EMMA is one product, but the reason it is worth discussing is what it represents: a new class of AI tool that targets the most visible, most emotionally charged operational problem in UK general practice — the telephone.

The question is not just whether EMMA works. It is whether the AI receptionist category itself becomes a durable layer in primary care infrastructure, or whether it turns out to be a transitional response to a specific moment of access pressure.

Why Now?

Three forces are converging to make the AI receptionist category commercially viable in 2026.

The first is persistent phone-access failure. CQC data continue to show that phone access to general practice remains difficult for a large proportion of patients. Barely half of those who tried to reach their GP by phone described the experience as easy. For a service that handles millions of patient contacts per week, that level of friction is both a quality problem and a reputational crisis.

The second is policy pressure. Since October 2025, practices in England have been required to keep online consultation tools available during core hours. NHS England's broader access modernisation programme has pushed practices to rethink how demand enters the system. The environment has shifted from "practices may want to modernise" to "practices are expected to modernise" — and the phone line is one of the most obvious areas where modernisation has lagged.

The third is a growing willingness within general practice to deploy AI in operational, non-clinical tasks. Ambient scribing tools have normalised the idea of AI sitting inside the consultation workflow. The step from "AI writes my notes" to "AI answers my phone" is smaller than it sounds, because both are workflow tools rather than autonomous clinical reasoning systems. Practices that might be wary of AI making diagnostic decisions are much more open to AI handling routine administrative phone calls.

EMMA sits at the intersection of all three pressures. That is what makes it strategically interesting, regardless of how any individual practice's pilot goes.

What QuantumLoop EMMA Is Positioning Itself to Do

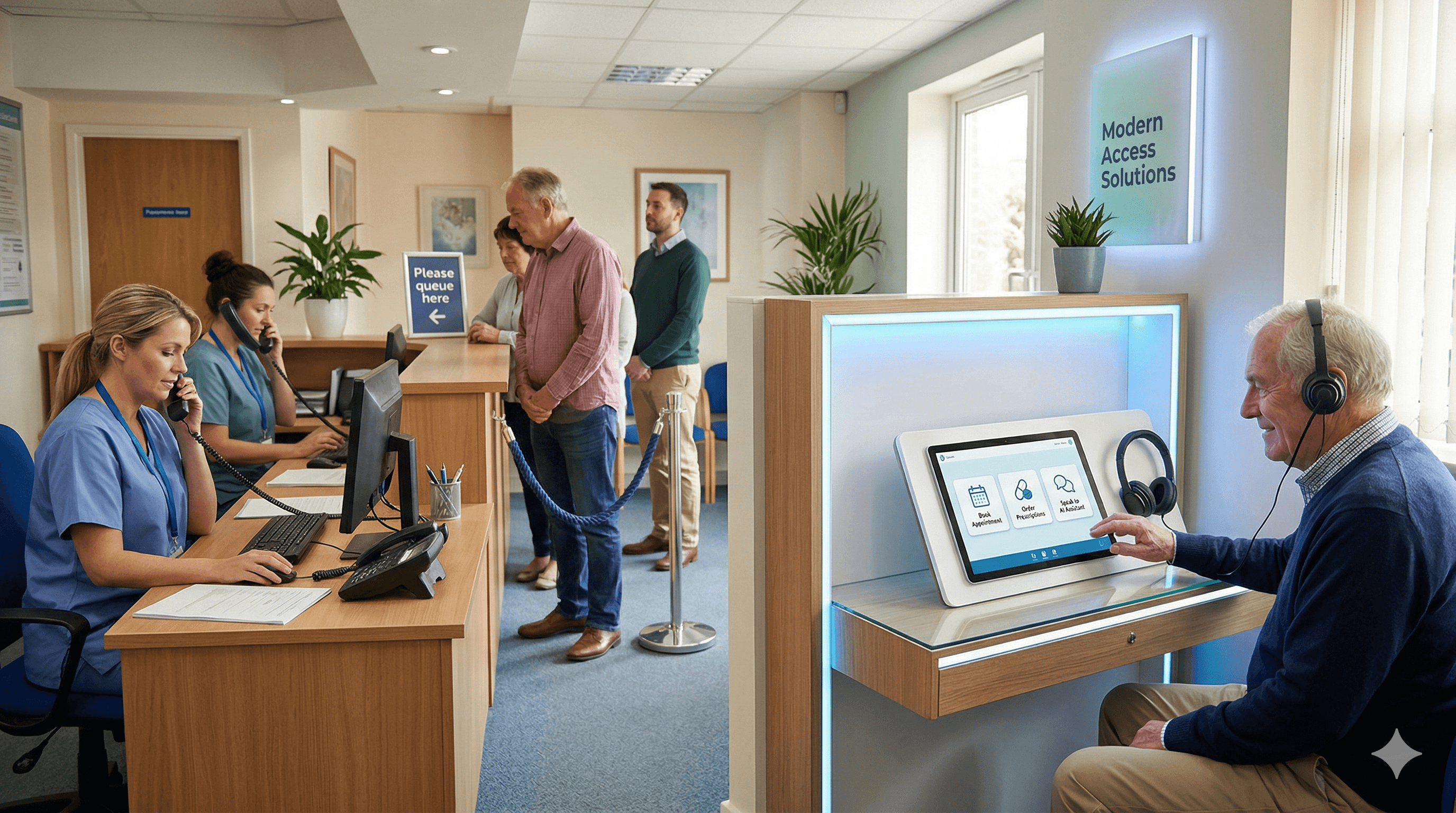

EMMA is marketed as AI reception specifically built for NHS GP surgeries. The vendor's positioning includes several core claims: every call is answered instantly, the system can handle large numbers of concurrent calls, multilingual support is available, integration with existing online consultation tools is offered, and phone queues are eliminated rather than merely shortened.

The branding is deliberate. EMMA is not called a triage tool or a navigation assistant. It is called a receptionist. That naming choice signals an intent to own the patient's first moment of contact — the point at which a caller either gets through or gives up.

Practice-level rollout examples appear on surgery websites, indicating real-world deployment rather than exclusively pilot-stage testing. The vendor also claims certification against the Digital Technology Assessment Criteria (DTAC), which positions EMMA within the NHS assurance framework for digital health technologies.

Why the AI Receptionist Category Is Strategically Interesting

Beyond any single vendor, the category itself has several properties that make it worth watching.

It targets one of the most visible pain points in general practice. Unlike documentation tools, which primarily affect clinician experience, or clinical decision support, which operates behind the scenes, an AI receptionist directly touches the patient experience at the moment of highest frustration. That makes it politically powerful. If it works, patients notice. If it fails, patients notice even more.

It affects both patient sentiment and staff workload simultaneously. Reception teams in general practice face extraordinary pressure, including high call volumes, emotional demand, and sometimes verbal abuse from frustrated patients. A tool that absorbs the peak-time load has a dual benefit: better patient experience and better working conditions for staff.

It may be easier to justify financially than more abstract productivity tools. Practice managers can point to measurable metrics — abandoned call rates, average queue time, patient complaints about phone access — and tie them directly to the AI receptionist's performance. That makes the business case more concrete than "clinicians feel less burdened by paperwork."

And it is operationally adjacent to total triage and digital navigation, which means the category boundaries will likely blur over time. A tool that starts as a phone-answering system may evolve into a front-door orchestration platform — or may be absorbed into broader practice management systems. The competitive dynamics are still forming.

Where EMMA Appears to Fit Versus Other Tools

Positioning EMMA against the broader landscape helps clarify what it is and what it is not.

Compared with online consultation vendors like Accurx, eConsult, or Anima, EMMA is more voice-first. Online consultation tools primarily serve patients who are comfortable submitting digital forms. EMMA targets the patients who pick up the phone — a group that overlaps with but is not identical to the digital cohort.

Compared with telephony vendors that offer call management, cloud telephony, or queue callback systems, EMMA is more workflow-aware. It does not just manage the queue — it captures intent and routes requests.

Compared with ambient scribing tools like Heidi or TORTUS, EMMA attacks access rather than documentation. These categories solve different problems and are complementary rather than competitive.

Compared with care-navigation assistants like X-on Surgery Assist, EMMA is more explicitly branded as a receptionist. Surgery Assist orchestrates multiple channels; EMMA focuses on the phone call experience. A practice might use both, or might find that one subsumes the other's function over time.

What Claims a Practice Should Test in the Real World

Vendor claims and real-world performance are different things. Before committing to any AI receptionist, including EMMA, a practice should gather evidence on several dimensions.

Call abandonment rates. The most direct metric. If the AI receptionist is working, fewer patients should hang up before their request is captured. Measure this against a baseline from the pre-implementation period.

Escalation accuracy. How well does the system identify and escalate clinically urgent or emotionally distressed callers? This is difficult to test in a demo and essential to test in practice. A small number of missed escalations can have serious consequences.

Patient satisfaction. Not just "did the AI answer?" but "did the patient feel heard, understood, and confident about what happens next?" Practice-level feedback is the place to look for this first, with the GP Patient Survey as a slower cross-check.

Reception staff experience. Are staff genuinely freed for higher-value work, or are they spending time correcting AI outputs, managing exceptions, and dealing with patients who are frustrated by the automated system?

Effect on GP Patient Survey metrics. This is the longer-term measure. If the AI receptionist is improving access, practice-level survey data should reflect it over time.

Handling of vulnerable callers. How does the system perform specifically with older patients, patients with cognitive impairment, patients with anxiety, patients with limited English, and patients in crisis? Aggregate metrics can mask serious problems at the margins.

Complaints and exceptions. How many interactions result in complaints? What is the nature of those complaints? How quickly and transparently are they resolved?
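The first two checks in this list, call abandonment and escalation accuracy, come down to simple arithmetic once a practice can export one record per call. The sketch below is a minimal illustration in Python, not a description of how EMMA or any telephony system reports its data. The `CallRecord` fields and all function names are hypothetical, and it assumes each call has been reviewed by a human to decide whether it needed escalation.

```python
from dataclasses import dataclass


@dataclass
class CallRecord:
    """One inbound call. Field names are hypothetical, not a vendor schema."""
    abandoned: bool          # caller hung up before the request was captured
    needed_escalation: bool  # judged urgent or distressed on later human review
    was_escalated: bool      # the system handed the call to a human


def abandonment_rate(calls: list[CallRecord]) -> float:
    """Share of calls where the caller hung up before the request was captured."""
    if not calls:
        raise ValueError("no calls to measure")
    return sum(c.abandoned for c in calls) / len(calls)


def escalation_sensitivity(calls: list[CallRecord]) -> float:
    """Of the calls that needed escalation, the share that were escalated."""
    urgent = [c for c in calls if c.needed_escalation]
    if not urgent:
        raise ValueError("no calls needing escalation in this sample")
    return sum(c.was_escalated for c in urgent) / len(urgent)


def missed_escalations(calls: list[CallRecord]) -> int:
    """Raw count of calls that needed escalation and did not get it."""
    return sum(1 for c in calls if c.needed_escalation and not c.was_escalated)


def change_in_abandonment(baseline: list[CallRecord],
                          pilot: list[CallRecord]) -> float:
    """Pilot rate minus baseline rate; a negative value means fewer hang-ups."""
    return abandonment_rate(pilot) - abandonment_rate(baseline)
```

Running `abandonment_rate` on a pre-implementation export and again on the pilot period gives the baseline comparison described above. It is worth reporting `missed_escalations` as a raw count alongside the rate, because a high percentage can still hide the handful of cases that matter most.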

The Risks and Unresolved Questions

No honest assessment of this category can avoid the risks.

Trust. Some patients will not trust an AI system at their GP surgery, full stop. This is not irrational — it reflects lived experience of systems that create barriers. Managing trust requires clear communication, genuine choice, and visible human fallback.

Perception of being fobbed off. The NHS has a long history of access "solutions" that patients experience as deflection. If an AI receptionist feels like one more scripted layer before real help, it will erode trust rather than build it.

Handling of urgency and ambiguity. General practice presentations are often vague, overlapping, and context-dependent. A patient who says "I feel a bit off" might need reassurance or might need an ambulance. AI systems that are trained on structured intents may struggle with the messy reality of how patients actually describe their concerns.

Equity and communication. Speech impediments, strong accents, hearing impairments, cognitive difficulties, emotional distress — all of these affect how people communicate on the phone. An AI receptionist that works well for clear, calm, articulate callers and poorly for everyone else is not equitable.

Whether faster answering translates into genuinely better access. This is the deepest question. If the AI answers the phone in two seconds but the patient still waits 48 hours for a callback, has access actually improved? Speed of answering is not the same as speed of resolution. The downstream workflow matters at least as much as the front door.
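One way to keep that distinction honest is to track time to answer and time to resolution as two separate numbers. The following is a minimal Python sketch under stated assumptions: the `Contact` record and its three timestamps are hypothetical, and what counts as "resolved" (a callback made, an appointment booked, advice given) is something each practice would have to define for itself.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Contact:
    """One patient contact. Field names are hypothetical."""
    called_at: datetime    # when the patient rang
    answered_at: datetime  # when the call was picked up
    resolved_at: datetime  # when the request was actually dealt with


def median_time_to_answer(contacts: list[Contact]) -> timedelta:
    """Median delay between the patient ringing and the call being answered."""
    seconds = [(c.answered_at - c.called_at).total_seconds() for c in contacts]
    return timedelta(seconds=median(seconds))


def median_time_to_resolution(contacts: list[Contact]) -> timedelta:
    """Median delay between the patient ringing and the request being resolved."""
    seconds = [(c.resolved_at - c.called_at).total_seconds() for c in contacts]
    return timedelta(seconds=median(seconds))
```

If the first figure falls sharply after go-live while the second barely moves, the front door has improved but access, in the sense that matters to the patient, has not. The median is used here so that a few very long waits do not dominate the figure; a practice may also want to look at the slowest cases separately.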

Where Knowledge and Learning Support the Transition

Practices implementing AI receptionists are also managing a significant change process. Clinicians, nurses, and administrative staff all need to understand what the AI does, how to interpret its outputs, and when to override or supplement its decisions.

A platform like iatroX supports this transition in a practical way. Its Ask iatroX feature gives triaging clinicians rapid, guideline-grounded answers when they are processing AI-captured requests and encounter clinical uncertainty. Its Brainstorm tool allows clinicians to reason through ambiguous cases step by step — exactly the kind of thinking that the back end of an AI receptionist workflow demands. And its CPD module lets clinicians log their learning from these new workflows and reflect on how their practice is evolving — turning operational change into professional development.

Conclusion

QuantumLoop EMMA matters because it helps define a new category. It is one of the most explicit attempts to create a branded AI receptionist for NHS general practice, and its emergence reflects real structural pressures that are not going away.

But the real story is not whether EMMA specifically succeeds. It is whether AI reception becomes a durable layer in UK primary care — a standard part of the practice stack, like online consultation tools or cloud telephony — or whether it turns out to be a transitional response to a specific moment of intense access pressure.

The answer depends on whether these tools can move beyond answering speed to genuinely improving the patient journey end to end. The category is promising. The evidence is still forming. And the practices that adopt wisely — with governance, workflow redesign, and honest measurement — will be the ones that determine the outcome.