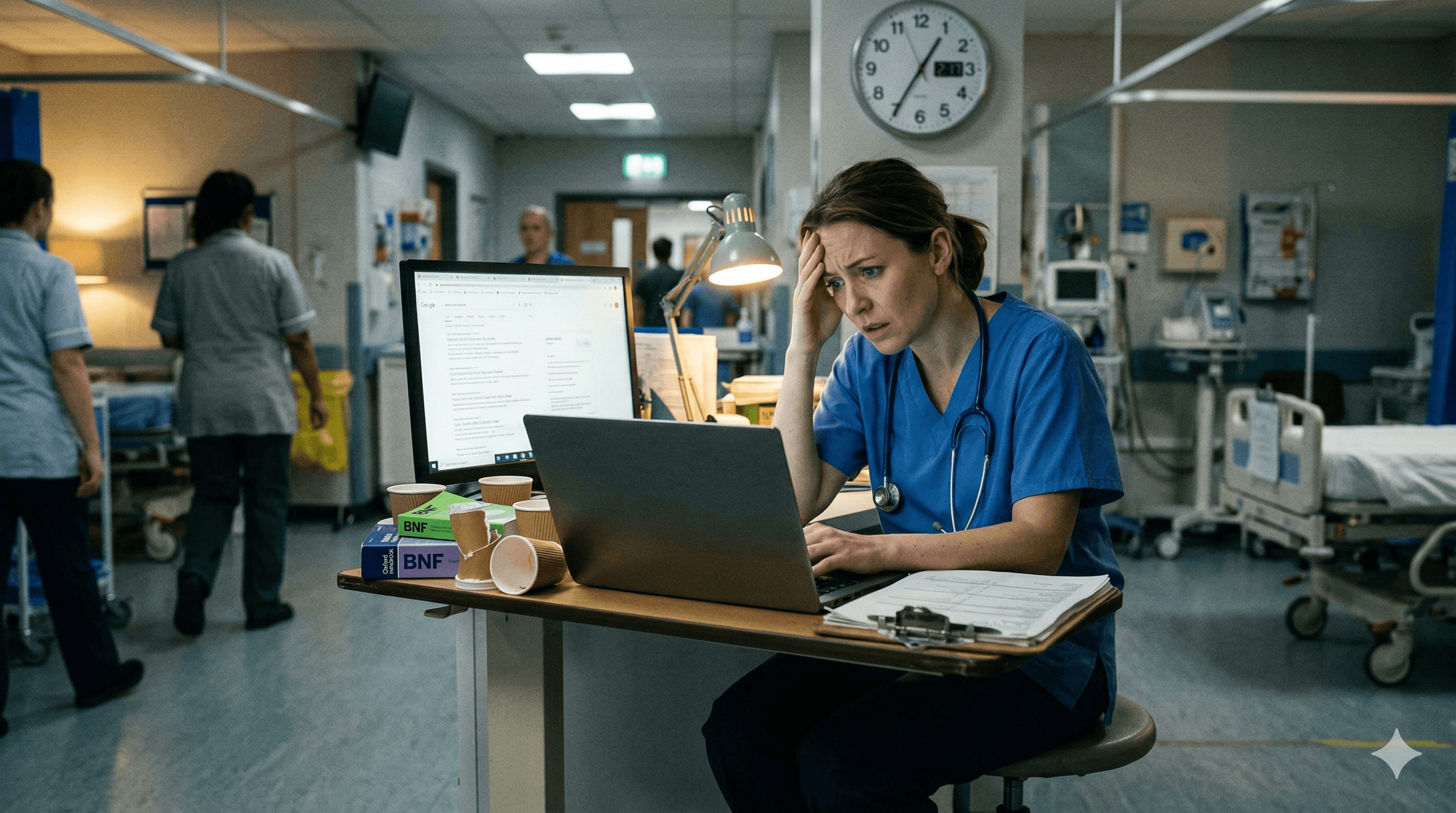

Every clinician does it. Between patients, during a ward round, at 2am on call — you type a clinical question into Google and scan the results for an answer. It is fast, familiar, and usually good enough. Until it is not.

The problem with Google for clinical questions is not that it never returns useful results. It sometimes does. The problem is that it is structurally incapable of reliably returning the right result for the specific question a clinician is asking, in the specific clinical context they need it for. And in clinical practice, "usually good enough" is not a safe standard.

What Google Actually Returns for Clinical Queries

When a UK GP types "hypertension management NICE" into Google, the results page typically contains a mixture of sources with very different authority levels, competing for the same screen space.

Patient-facing NHS.uk content. NHS.uk is designed for patients, not clinicians. The information is accurate but simplified, lacks the clinical detail needed for prescribing decisions, and does not include the nuanced recommendations that NICE guidelines contain.

NICE guideline pages. Sometimes the right NICE page appears. But Google may surface the overview page rather than the specific recommendation you need, or an older version of the guidance that has been superseded. NICE has restructured its website multiple times, and Google's index does not always reflect the current architecture.

CKS pages. NICE Clinical Knowledge Summaries (CKS) are the most clinically useful result for a primary care clinician. But CKS and the main NICE website are indexed separately, and Google does not always prioritise CKS for clinical queries, particularly when the query does not include "CKS" as a keyword.

Third-party summaries. Medical education websites, pharmaceutical company resources, and health journalism sites are heavily optimised for search. They often outrank authoritative sources because they invest more in search engine optimisation (SEO) than guideline publishers do. Their content may be accurate, but it may also be outdated, US-centric, commercially influenced, or oversimplified.

Patient forums and Q&A sites. For some queries, Google returns patient-written content from forums, health Q&A platforms, or social media. These have no clinical authority whatsoever.

Advertisements. Pharmaceutical companies and medical education companies bid on clinical keywords. The first result for a clinical query may be a sponsored link to a commercial product.

The clinician scanning these results in 20 seconds between patients must rapidly assess which source is authoritative, current, UK-specific, and clinically appropriate. This requires more cognitive effort than most people recognise — and in a time-pressured clinical environment, the temptation to click the first plausible-looking result is strong.

The Specific Problems

No provenance guarantee

Google ranks results by relevance signals, not by clinical authority. A well-optimised blog post can outrank a NICE guideline for the same query, because the ranking does not distinguish between a peer-reviewed guideline and a content-marketing article.

No jurisdictional filtering

If you are a UK clinician, you need UK guidance. Google does not reliably filter by jurisdiction. A search for "management of atrial fibrillation" may return results based on AHA/ACC guidelines (US), ESC guidelines (European), or NICE guidelines (UK), without clearly indicating which is which. For a clinician who needs the NICE recommendation, a US-centric result is not just unhelpful — it may recommend a different treatment pathway.
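To make the provenance and jurisdiction checks concrete, here is a minimal sketch of the filtering a clinician currently does by eye: sorting result URLs by whether they come from a UK national guidance host. The tier table, the tier numbers, and the function names are illustrative assumptions, not an official ranking and not how any search engine or product works.

```python
from urllib.parse import urlparse

# Hypothetical allowlist of hostnames treated as UK national guidance.
# The tier numbers are illustrative assumptions, not an official ranking.
AUTHORITY_TIERS = {
    "www.nice.org.uk": 1,
    "cks.nice.org.uk": 1,
    "bnf.nice.org.uk": 1,
    "www.sign.ac.uk": 1,
    "www.nhs.uk": 2,  # accurate, but written for patients
}
UNKNOWN_TIER = 9  # anything not on the allowlist


def authority_tier(url: str) -> int:
    """Return the tier for a URL's hostname, or UNKNOWN_TIER if unlisted."""
    host = (urlparse(url).hostname or "").lower()
    return AUTHORITY_TIERS.get(host, UNKNOWN_TIER)


def rerank(urls: list[str]) -> list[str]:
    """Put known guidance first; sorted() is stable, so ties keep their order."""
    return sorted(urls, key=authority_tier)


def uk_guidance_only(urls: list[str]) -> list[str]:
    """Keep only results from tier-1 (clinician-facing UK guidance) hosts."""
    return [u for u in urls if authority_tier(u) == 1]


if __name__ == "__main__":
    # Made-up result list; the paths are placeholders, not real pages.
    results = [
        "https://blog.example.com/hypertension-tips",
        "https://www.nhs.uk/example-page",
        "https://cks.nice.org.uk/example-topic",
    ]
    for url in rerank(results):
        print(authority_tier(url), url)
```

A hostname check like this says nothing about whether a page is current or answers the question asked. It only shows that provenance and jurisdiction can be tested explicitly rather than inferred from a results page.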

Outdated results

Guidelines change. Google's index does not always reflect the most current version. A CKS page that was updated last month may be outranked by a stale copy of the older page, a third-party summary based on the previous guideline edition, or a PDF from a trust that has not updated its local policy.

SEO contamination

The medical information space is heavily commercialised. Pharmaceutical companies, private healthcare providers, supplement manufacturers, and medical education companies all invest in SEO to rank for clinical keywords. Their content is designed to attract clicks, not to provide the definitive clinical recommendation. The clinician who clicks the top result without checking the source may be reading commercially influenced content rather than national guidance.

No clinical context

Google returns documents. It does not answer questions. If you type "what is the first-line treatment for newly diagnosed hypertension in a 55-year-old with no comorbidities?", Google does not return a direct answer. It returns a list of pages that might contain the answer, and you have to find it within those pages. This is structurally different from asking a clinical question and receiving a direct, cited response.

What Clinicians Actually Need

The clinical workflow requires a tool that meets five criteria simultaneously.

Speed. An answer in 15 seconds, not 2 minutes of scrolling and clicking.

Authority. Grounded in the national guidelines that govern the clinician's practice.

Currency. Reflecting the most current version of the guidance.

Specificity. Answering the specific clinical question, not returning a generic page.

Provenance. Showing where the answer came from, with a verifiable link to the primary source.

Google meets none of these criteria reliably. It is fast, but the speed is undermined by the cognitive effort of filtering results. It is not authority-aware. It is not always current. It does not answer specific questions. And it does not show provenance in a clinically useful way.

What to Use Instead

Ask iatroX is designed to meet all five criteria. You type a clinical question in natural language. The tool retrieves relevant information from a curated corpus of NICE, CKS, SIGN, and BNF content, together with peer-reviewed research, using retrieval-augmented generation (RAG). It returns a direct answer with visible citations linking to the primary source. Time to answer: seconds.

The architectural difference is fundamental. Google is a search engine that returns documents. iatroX is a clinical AI that answers questions from verified sources. One requires you to do the interpretation work. The other does the retrieval and synthesis, then shows you the source so you can verify.
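The retrieve-then-cite pattern can be illustrated with a toy sketch. This is not iatroX's implementation: the corpus entries, URLs, stopword list, and function names are all hypothetical, the passages are placeholder text rather than real guidance, and simple keyword overlap stands in for a real retriever. The generation step is omitted entirely; the point is only that every returned passage carries its source.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # publisher label, e.g. "CKS"
    url: str     # hypothetical link to the primary source
    text: str    # placeholder text, not real guidance


# Hypothetical curated corpus; a real one would hold vetted guideline content.
CORPUS = [
    Passage("CKS", "https://example.org/cks/hypertension",
            "Placeholder passage on first-line treatment for hypertension in adults."),
    Passage("BNF", "https://example.org/bnf/drug-x",
            "Placeholder passage on dosing and interactions for a drug."),
    Passage("SIGN", "https://example.org/sign/asthma",
            "Placeholder passage on asthma management in adults."),
]

# Small illustrative stopword list so common words do not count as matches.
STOPWORDS = {"a", "an", "and", "for", "in", "is", "of", "on", "the", "to", "what"}


def tokens(text: str) -> set[str]:
    """Lowercase word tokens with stopwords removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, corpus: list[Passage], k: int = 2) -> list[Passage]:
    """Return up to k passages sharing at least one keyword with the question."""
    q = tokens(question)
    scored = [(len(q & tokens(p.text)), p) for p in corpus]
    scored = [(s, p) for s, p in scored if s > 0]
    scored.sort(key=lambda sp: sp[0], reverse=True)
    return [p for _, p in scored[:k]]


def answer_with_citations(question: str, corpus: list[Passage]) -> str:
    """Format retrieved passages with numbered citations, or say nothing was found."""
    hits = retrieve(question, corpus)
    if not hits:
        return "No supporting passage found in the corpus."
    return "\n".join(
        f"[{i}] {p.text} ({p.source}: {p.url})" for i, p in enumerate(hits, 1)
    )


if __name__ == "__main__":
    print(answer_with_citations("first-line treatment for hypertension", CORPUS))
```

The design choice worth noticing is the empty-result branch: a tool restricted to a curated corpus can decline to answer when nothing matches, whereas a general search engine will always return something.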

The Knowledge Centre provides a complementary approach: structured, condition-by-condition navigation of UK national guidance, with direct links to NICE, CKS, SIGN, and BNF content. This is the "I want to browse" route, versus Ask iatroX's "I have a specific question" route.

Going directly to NICE CKS remains an excellent option when you know exactly which topic you need. Bookmark your most-used CKS topics for direct access.

The BNF app is essential for prescribing queries specifically. No AI tool should replace direct BNF verification for drug dosages and interactions.

The practical recommendation: stop Googling clinical questions. Use iatroX for rapid guideline-grounded answers. Use CKS for detailed point-of-care summaries. Use the BNF for prescribing. And use Google for what it is actually good at in a clinical context: finding non-clinical information like conference dates, journal access, and practice logistics.

Conclusion

Google is the world's best general-purpose search engine. It is not a clinical decision support tool. The gap between these two things is where patient safety risks live.

Every time a clinician Googles a clinical question and acts on a result without verifying its authority, currency, and jurisdiction, they are accepting a level of risk that purpose-built tools have been specifically designed to reduce.

iatroX is free, UK-specific, guideline-grounded, and designed for the exact workflow that clinicians use Google for — but with the authority, provenance, and speed that Google cannot provide. The switch is not difficult. It is just a different URL, a different habit, and a fundamentally safer foundation for clinical decisions.