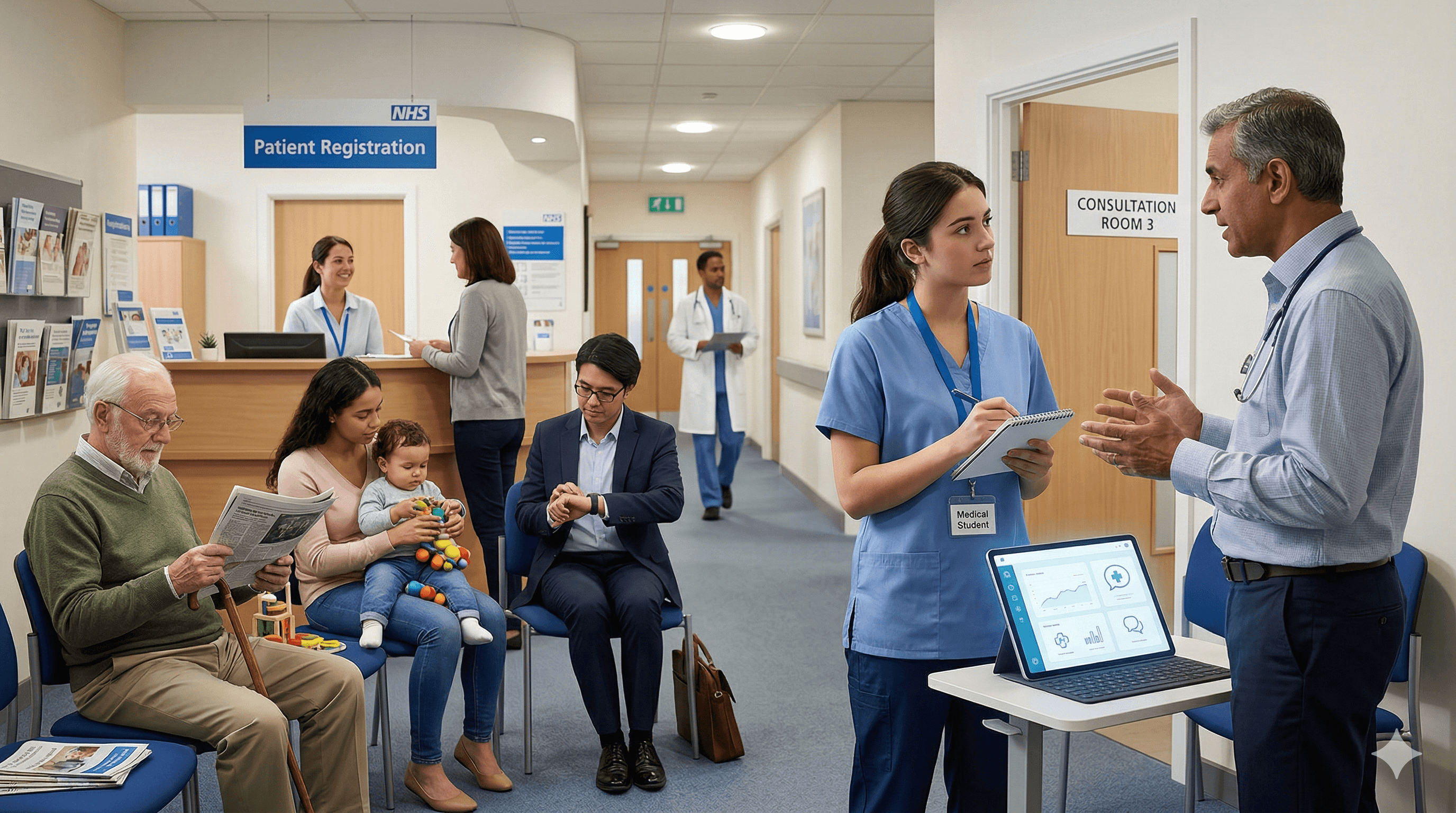

GP placement is unlike any other clinical rotation. You see undifferentiated symptoms for the first time — patients who walk in with vague complaints that could be anything from anxiety to cancer. You learn that most of medicine happens outside hospitals, that communication matters as much as knowledge, and that uncertainty is not a failure of competence but a feature of the discipline.

AI tools are now part of the landscape for medical students. Healthcare education bodies, including the Medical Schools Council, are actively encouraging medical schools to teach AI literacy, data governance, digital ethics, and responsible use. The GMC expects that professional standards — including responsibility for decisions — apply fully when AI tools are used.

The question for students on GP placement is not whether to use AI. It is how to use it in a way that deepens learning rather than hollowing it out. Done well, AI sharpens preparation, consolidates understanding, and turns clinical uncertainty into focused revision. Done badly, it replaces the thinking that placement exists to develop.

What GP Placement Is Actually For

This matters, because the purpose of placement determines what counts as good or bad AI use.

GP placement teaches you to see undifferentiated symptoms. Hospital rotations often present you with patients who have already been filtered through emergency departments and referral pathways. In general practice, the patient arrives with a story, and it is your job to work out what the story means. That cognitive work — generating differentials from unstructured information — is the core skill of primary care.

It teaches you primary-care probability. The rash that would be alarming in a hospital corridor is usually benign in a GP waiting room. Learning which presentations are common, which are rare, and which demand action even when they are unlikely is a calibration exercise that takes time and exposure.

It teaches you communication and rapport. The GP consultation is a dialogue, not a procedure. Learning how to build trust, explore the patient's ideas, concerns, and expectations, and deliver explanations that make sense to non-medical people is a skill that no AI tool can replicate, and one that no amount of reading can substitute for practising.

It teaches you safety-netting. This is the art of telling a patient what to watch for and when to come back. It requires clinical judgement, communication skill, and the ability to hold uncertainty without either dismissing it or catastrophising it.

It teaches you the administrative reality of general practice — coding, referrals, prescriptions, letters, results handling, and the constant juggling of competing priorities. Understanding this operational layer is part of understanding the discipline.

AI can support many of these learning goals. But it cannot provide them. The learning comes from the clinical encounter, the human interaction, and the reflective processing afterwards. AI is a tool for consolidation, not a substitute for experience.

Good Uses of AI on GP Placement

Before Clinic

Use AI to revise common GP presentations. If you know you are sitting in on a chronic disease management clinic, spend 20 minutes the night before reviewing the conditions you are likely to see. iatroX's Ask feature is useful here — you can ask a specific question about NICE-recommended management for type 2 diabetes, for example, and receive a citation-first answer grounded in UK guidelines rather than a generic internet summary.

Practise differential frameworks. If you find yourself weak on musculoskeletal presentations or paediatric rashes, use AI to generate structured history prompts or differential checklists that you can test yourself against.

Review guideline-based management pathways so that when your supervisor discusses a case, you can follow the reasoning rather than hearing it for the first time.

After Clinic

This is where AI is most valuable on placement.

Consolidate learning from the conditions you encountered. You saw a patient with vertigo — now use AI to review the differential, the red flags, and the management. The clinical encounter primes your memory; the post-clinic review locks it in.

Turn uncertainty into focused revision questions. If you were unsure during a consultation whether a symptom pattern warranted urgent investigation, that uncertainty is a gift — it tells you exactly what to study. Use iatroX to clarify the guideline pathway and resolve the gap.

Compare your impression with guidelines. If you thought the patient needed a referral and your supervisor managed them in primary care, explore why. What does the guidance say? What threshold was your supervisor using? This comparison between your instinct and expert practice is where real learning happens.

Summarise learning points for your logbook or reflective notes. AI can help you structure your reflections — but the reflection itself must be genuine. Use AI as a scaffold, not a ghostwriter.

Skills Practice

OSCE-style rehearsal is one of the most productive uses of AI for medical students. Tools like Geeky Medics' AI patients allow you to practise structured consultations, and platforms like AMBOSS AI Mode offer question-linked clarification that helps you connect clinical scenarios to learning material.

iatroX's Q-Bank uses spaced repetition and active recall, two of the best-evidenced methods for building durable knowledge. If you are seeing common GP presentations on placement, running related quiz questions in the evening reinforces what you encountered during the day. The adaptive algorithm targets your weak areas, so your revision is focused rather than random.

Bad Uses of AI on GP Placement

Using AI to generate answers you present as your own. If your supervisor asks what you think the diagnosis is and you repeat what an AI told you, you have not learned anything — and you have been dishonest about your reasoning process.

Entering identifiable patient information into AI tools without appropriate governance and permission. Most consumer AI tools are not designed for clinical data. If your medical school or placement practice has not approved a specific tool for use with patient information, assume it is not permitted.

Using AI output as if it were a definitive management plan. AI tools provide information. Clinical decisions are made by clinicians — and on placement, by your supervisor. An AI-generated management suggestion is not a plan; it is a reference point that needs clinical judgement.

Bypassing the educational value of uncertainty. If you encounter a case that confuses you and your first instinct is to ask an AI for the answer before you have tried to work it out yourself, you are robbing yourself of the cognitive struggle that builds clinical reasoning. The discomfort of not knowing is the engine of learning.

Relying on AI to tell you what to think before you have attempted your own assessment. The sequence matters enormously. Think first, then check. Not the other way around.

A Safe Workflow for Students

This is perhaps the most practical section of this article. If you remember nothing else, remember this sequence.

Step 1: See the patient or case. Pay attention. Take notes. Observe your supervisor's reasoning.

Step 2: Think first yourself. Before opening any tool, form your own view. What do you think is going on? What are you uncertain about? What would you do next?

Step 3: Write your provisional differential and plan. Put that view on paper, even if it is incomplete. Even if it is wrong. The act of committing to a position is what creates the learning opportunity.

Step 4: Check against trusted resources. Use guideline-based tools like iatroX or your medical school's recommended references to verify, correct, and expand your understanding.

Step 5: Use AI to clarify gaps, not to replace your first-pass reasoning. If you were wrong about something, understand why. If you were right, understand the evidence that supports you. Use the AI to fill in what you did not know — not to do the thinking you should have done.

Step 6: Discuss with your supervisor. Share what you thought, what you learned, and where you are still uncertain. This conversation is the most valuable part of the learning cycle.

Step 7: Document your learning. Use your logbook, portfolio, or reflection tool. iatroX's CPD module can help structure this — it maps learning activities to professional domains and supports AI-assisted reflection — but the content of the reflection must be genuinely yours.

The GMC is clear that professionals remain responsible for decisions and that professional standards continue to apply when using AI tools. For students, this translates into a simple principle: use AI to support your learning, not to simulate competence you have not yet developed.

What Students Should Be Especially Careful About in GP

Certain areas of general practice require particular caution when it comes to AI use.

Vague symptoms. GP is full of presentations where the answer is not clear. Tiredness, dizziness, "just not feeling right." AI tools may offer neat differentials, but the real learning is in sitting with the ambiguity and learning how experienced GPs navigate it.

Mental health. Patients disclosing psychological distress deserve full human attention. AI tools should not be consulted during the consultation, nor used as a substitute for genuine engagement with a patient's mental health presentation.

Safeguarding. Safeguarding decisions — identifying and acting on concerns about abuse, neglect, or vulnerability — require contextual judgement, professional responsibility, and institutional knowledge that AI cannot provide. Never rely on AI output for safeguarding decisions.

Prescribing. AI tools may provide drug information, but prescribing decisions on placement are supervised decisions. Do not treat AI-generated prescribing suggestions as if they were authoritative.

Urgent deterioration. If a patient is acutely unwell, the priority is clinical action, not AI consultation. Time spent querying an AI tool is time taken away from assessment and escalation.

Social context. Many GP presentations are inseparable from the patient's social circumstances — housing, employment, relationships, caring responsibilities. AI tools that focus on biomedical pattern matching may miss the factors that matter most in a primary-care consultation.

Which Tools Are Useful for What

OSCE and communication rehearsal: Geeky Medics AI patients provide structured clinical scenario practice with feedback.

Structured study clarification: AMBOSS offers AI-powered question-linked learning that connects clinical queries to educational content.

Question-bank reinforcement: Quesmed and Passmedicine remain useful for exam-style knowledge testing and structured revision.

Guideline-first clinical learning: iatroX provides citation-first answers grounded in guidance from NICE, CKS, SIGN, and the BNF, an adaptive Q-Bank built on spaced repetition, a Brainstorm tool for working through clinical scenarios step by step, and a CPD module for structured reflection. It is free, UKCA-marked, and designed for UK clinical practice, which makes it a natural companion for GP placement learning.

Conclusion

The best use of AI on GP placement is to deepen your learning after clinical contact, not to replace the human and clinical learning that placement exists to provide.

Think first. Then check. Then reflect. That sequence — applied consistently across your placement — will make you a better clinician than any amount of AI-generated information consumed passively.

GP placement is one of the most formative experiences in medical education. Protect it by using AI as a tool for consolidation, clarification, and self-assessment — not as a shortcut past the hard, valuable, irreplaceable work of learning to think for yourself in the face of uncertainty.