There is a familiar clinical moment that happens before any guideline page is opened.

You do not yet know which bucket the problem belongs in.

You do not yet know which management pathway applies.

You do not yet know whether the symptom cluster is ordinary, atypical, or quietly dangerous.

You only know that something feels broad, incomplete, and not ready for neat categorisation.

That is the moment this article is about.

A great deal of clinical AI commentary still views every tool through the same lens: medical search. But that lens can be too blunt to be useful. Some tools are built for evidence-backed confirmation once the problem is already roughly defined. Others are built for an earlier stage of thinking: broadening the frame, avoiding premature closure, generating alternative hypotheses, and suggesting the next diagnostic questions.

That distinction matters because it changes what a tool is actually for.

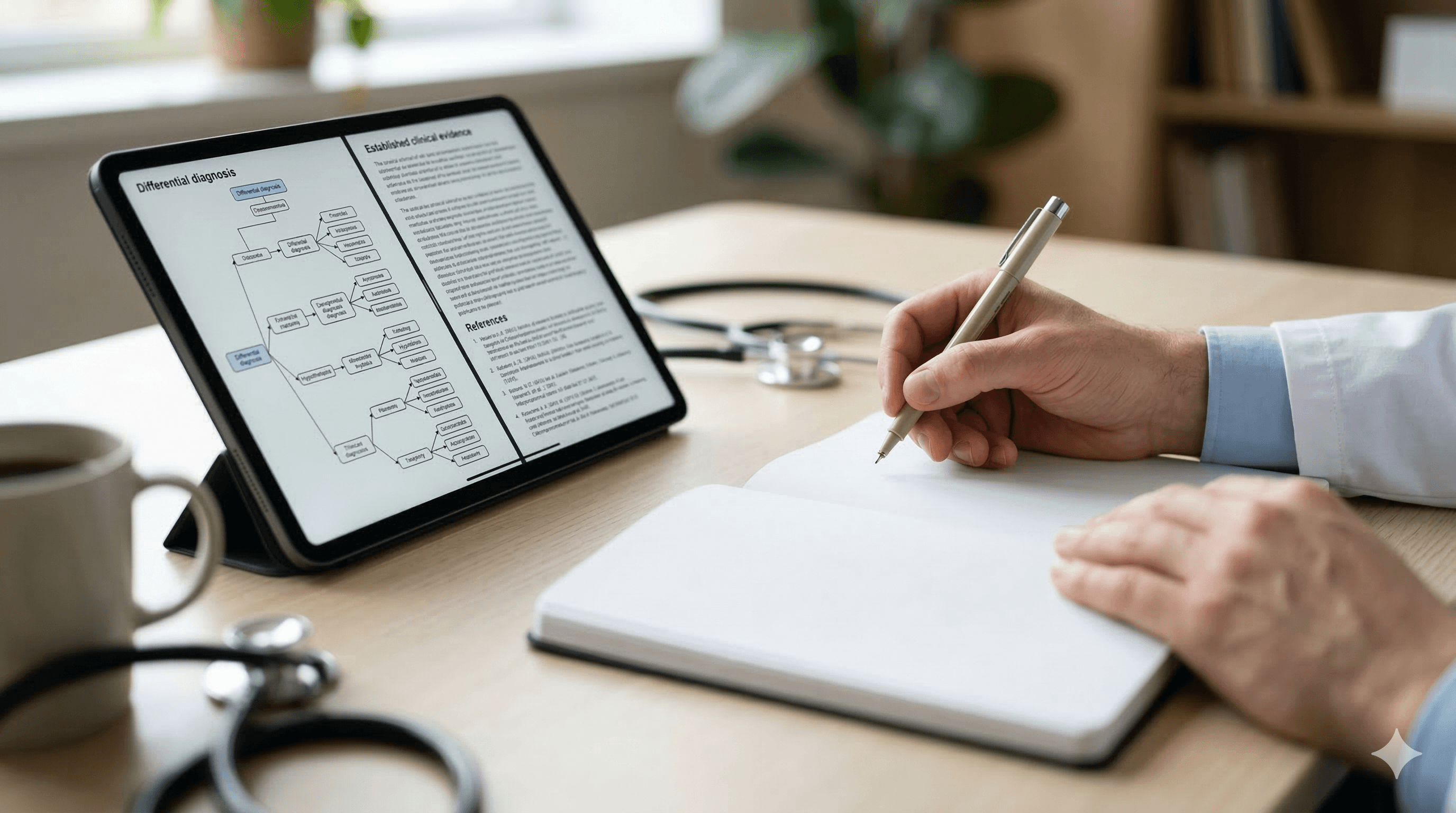

DxGPT is not best understood as another UpToDate-style resource with a different interface. It is better understood as a tool for the diagnostic blank-page problem: the first 90 seconds of uncertainty, before the clinician has enough confidence in the frame to know which definitive source they should even be consulting.

The mistake people make when comparing clinical AI tools

The most common mistake in this category is treating all clinical AI as though it solves the same job.

It does not.

When clinicians compare a differential-diagnosis tool against an evidence-led reference tool as though both are trying to do identical work, disappointment is almost inevitable. One product is judged unfairly for not offering management-grade certainty. The other is judged unfairly for not broadening the frame when the diagnosis is still foggy.

The problem is not necessarily that either tool is weak. The problem is that the comparison starts from the wrong question.

A better question is: At what phase of thinking is this tool meant to help?

If you ask that question first, category boundaries become much clearer.

What UpToDate-style tools are optimised for

UpToDate-style tools are strongest when the problem is already narrow enough to support targeted retrieval.

That usually means:

- you broadly know what you are dealing with

- you want confirmation or management detail

- you need treatment nuance

- you want source-backed recommendations

- you need point-of-care clarity rather than diagnostic ideation

- you are checking pathway, medication, or monitoring detail

This is an important phase of work, and it is often the higher-stakes one. Once the likely problem is identified, clinicians need tools that are good at:

- management structure

- evidence-backed recommendations

- treatment pathways

- dosing and monitoring detail

- confirmation of what current practice expects

In other words, UpToDate-style tools are usually strongest after the problem has acquired a usable shape.

They are not built primarily for diagnostic blank-page work. They are built for evidence-based decision support once the frame is already more defined.

That is why comparing DxGPT to an UpToDate-style tool as though both are aiming for the same destination can distort the assessment from the start.

What DxGPT appears optimised for

DxGPT’s public positioning points in a different direction.

Its centre of gravity is not polished guideline retrieval after the case has been tightly framed. It is earlier. The product is positioned around natural-language symptom descriptions, structured diagnostic suggestions, interactive follow-up questions, disease information, and iterative refinement. That is a very specific workflow shape.

It suggests that the tool is built for a clinician who is still asking:

- what am I really looking at?

- what else should I be considering?

- what questions discriminate between these possibilities?

- what am I at risk of missing because I have anchored too early?

That makes DxGPT less like a classic evidence destination and more like a diagnostic-ideation layer.

This is why its strongest use case is not “I know the diagnosis and want management nuance.” Its strongest use case is closer to “I do not yet trust the frame, and I want a structured way to widen it.”

The first 90 seconds of uncertainty

This is the most useful way to think about the category.

There is an early phase in clinical reasoning that happens before medical search becomes the dominant task.

Something feels off.

The presentation is broad.

The pattern has not resolved cleanly.

You are trying not to narrow too early.

You need alternative hypotheses or better questions before you need a definitive recommendation.

That window may be very brief, but it matters.

Call it the first 90 seconds of uncertainty.

This is the phase where the main job is not yet:

- confirm the guideline

- check the treatment sequence

- refine the dosage

- read the evidence summary

The job is:

- orient

- broaden

- discriminate

- avoid premature closure

- turn vague concern into better diagnostic structure

That is where a tool like DxGPT fits most naturally.

Seen through that lens, it is not really competing head-on with UpToDate-style tools. It is competing for a much earlier slice of the workflow: the moment before the clinician has a stable enough frame to know what evidence page or management topic should be opened next.

That is a very different competitive arena.

Where DxGPT helps most

The strongest value of a differential-diagnosis (DDx) tool tends to appear when the problem is still diagnostically loose.

That often includes:

Unusual symptom clusters

When the presentation does not sit neatly inside a familiar pattern, a differential-broadening tool can help prevent narrow early framing.

Complex or rare disease suspicion

This is one reason DxGPT gets attention. Rare or multisystem presentations create exactly the kind of uncertainty window where broadening the hypothesis space can be useful.

Atypical cases

When the case feels like a familiar diagnosis but with enough wrongness to make you uneasy, a DDx tool can help surface alternatives or remind you what else deserves consideration.

Hypothesis expansion

This is perhaps the cleanest framing. A DDx tool helps widen the field. It does not end the reasoning process. It changes its shape.

Educational reflection

DDx tools can also be useful after the fact, not only in real-time reasoning. They prompt reflective questions: what else should I have considered, and what questions would have made the frame cleaner earlier?

That makes the category relevant not only to diagnosis, but to learning.

Where evidence-led tools still matter more

Once the likely problem is identified, the centre of gravity shifts.

At that point, evidence-led tools matter more for:

- management after the likely diagnosis is identified

- checking source-backed recommendations

- confirming treatment nuance

- medication decisions

- pathway details

- monitoring plans

- discharge or follow-up logic

- risk-benefit decisions where evidence and accepted practice matter more than ideation

This is a crucial distinction.

A clinician expecting guideline-level certainty from a DDx tool may be disappointed for the wrong reason.

A clinician expecting a broad, hypothesis-expanding response from an evidence engine may be disappointed for the wrong reason too.

The tools are solving different phases of thinking.

That is why the correct question is not “Which tool is better?”

It is “Which phase of reasoning am I in?”

Why this category distinction matters

This is not just an academic distinction. It changes tool choice, trust, and everyday behaviour.

If clinicians treat all clinical AI as generic medical search, they risk several errors at once:

- picking the wrong tool for the task

- expecting the wrong type of output

- over-trusting one tool because it is strong in a different phase

- underusing another tool because its real value appears earlier in the workflow

Getting the category right reduces disappointment.

It also improves safety. A DDx tool used as though it were a treatment authority is being misused. An evidence engine used as though it were a blank-page diagnostic ideation tool may be less helpful than expected in the moment of broad uncertainty.

This is one reason the “all clinical AI tools are converging into one giant assistant” narrative is often too simplistic. The workflows are still meaningfully different, even if some products now span more than one of them.

Where iatroX fits in this split

iatroX fits most credibly in this conversation when it is not forced into the wrong category.

It is not best framed as a pure DDx generator.

It is not best framed as only a generic evidence-search engine either.

A stronger and more believable framing is that iatroX sits closer to clinician workflow, knowledge reinforcement, and structured clarification.

That means it becomes especially useful where the clinician wants:

- practical knowledge reinforcement

- structured explanation rather than only raw hypothesis generation

- movement between question-bank logic and clinical reasoning logic

- a layer that helps connect uncertainty to clearer understanding

If DxGPT helps broaden the field when the case still feels blank, iatroX fits best as the layer that helps the clinician understand, orient, and learn through that uncertainty in a more structured way.

The key point is not that one tool replaces the others. It is that the stack makes more sense when each layer has a clear job.

A practical way to use the split

A useful mental model is this:

If the problem is still broad

Use a DDx-style tool to widen the frame, generate alternatives, and prompt discriminating questions.

If the likely diagnosis is forming

Use a structured clinical knowledge layer to clarify, orient, and reinforce your understanding of what actually matters.

If the likely problem is identified

Use an evidence-led or reference tool to check management, recommendations, medications, and pathway detail.

If you want to retain what you learned

Use a learning or reinforcement layer so that the same uncertainty becomes educational rather than disposable.

That is a much more intelligent way to think about clinical AI than looking for one universal winner.
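For readers who think in code, the same mental model can be written as a small lookup table. This is only an illustrative sketch: the names `Phase`, `TOOL_FOR_PHASE`, and `suggest_tool` are hypothetical, and the mapping simply restates the four steps above rather than describing how any product actually works.

```python
from enum import Enum


class Phase(Enum):
    """Hypothetical labels for the four phases in the mental model above."""
    BROAD = "the problem is still broad"
    FORMING = "the likely diagnosis is forming"
    IDENTIFIED = "the likely problem is identified"
    RETAIN = "you want to retain what you learned"


# Tool category and its job for each phase, paraphrased from the article.
# Assumption: one category per phase; real products may span several.
TOOL_FOR_PHASE = {
    Phase.BROAD: (
        "DDx-style tool",
        "widen the frame, generate alternatives, prompt discriminating questions",
    ),
    Phase.FORMING: (
        "structured clinical knowledge layer",
        "clarify, orient, reinforce understanding of what matters",
    ),
    Phase.IDENTIFIED: (
        "evidence-led or reference tool",
        "check management, recommendations, medications, pathway detail",
    ),
    Phase.RETAIN: (
        "learning or reinforcement layer",
        "make the uncertainty educational rather than disposable",
    ),
}


def suggest_tool(phase: Phase) -> str:
    """Return the tool category and its job for the given reasoning phase."""
    category, job = TOOL_FOR_PHASE[phase]
    return f"{category}: {job}"


if __name__ == "__main__":
    for phase in Phase:
        print(f"If {phase.value} -> {suggest_tool(phase)}")
```

The point of the sketch is that the input is the phase of reasoning, not the name of a product. The choice of tool follows from the phase, which is the question the whole comparison should start from.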

Conclusion

DxGPT is not best understood as another medical search engine.

It is better understood as a tool for the diagnostic blank-page problem.

Its centre of gravity is not polished guideline retrieval after the case is well defined. It is the earlier moment: vague presentation, incomplete framing, need for broader hypotheses, better next questions, and structured diagnostic prompts before the evidence-confirmation phase begins.

That is why comparing DxGPT directly to UpToDate-style tools can miss the point. They are not starting from the same assumption about where the clinician is in the reasoning process.

The right tool depends on the phase of thinking:

- broadening

- clarifying

- managing

- retaining

And that is the most useful way to understand the category.

Use the right tool for the phase of uncertainty you are actually in.