Most listicles about “the best AI tools for residents” make the same basic mistake: they compare products as though one tool should do everything.

That is not how internship feels in real life.

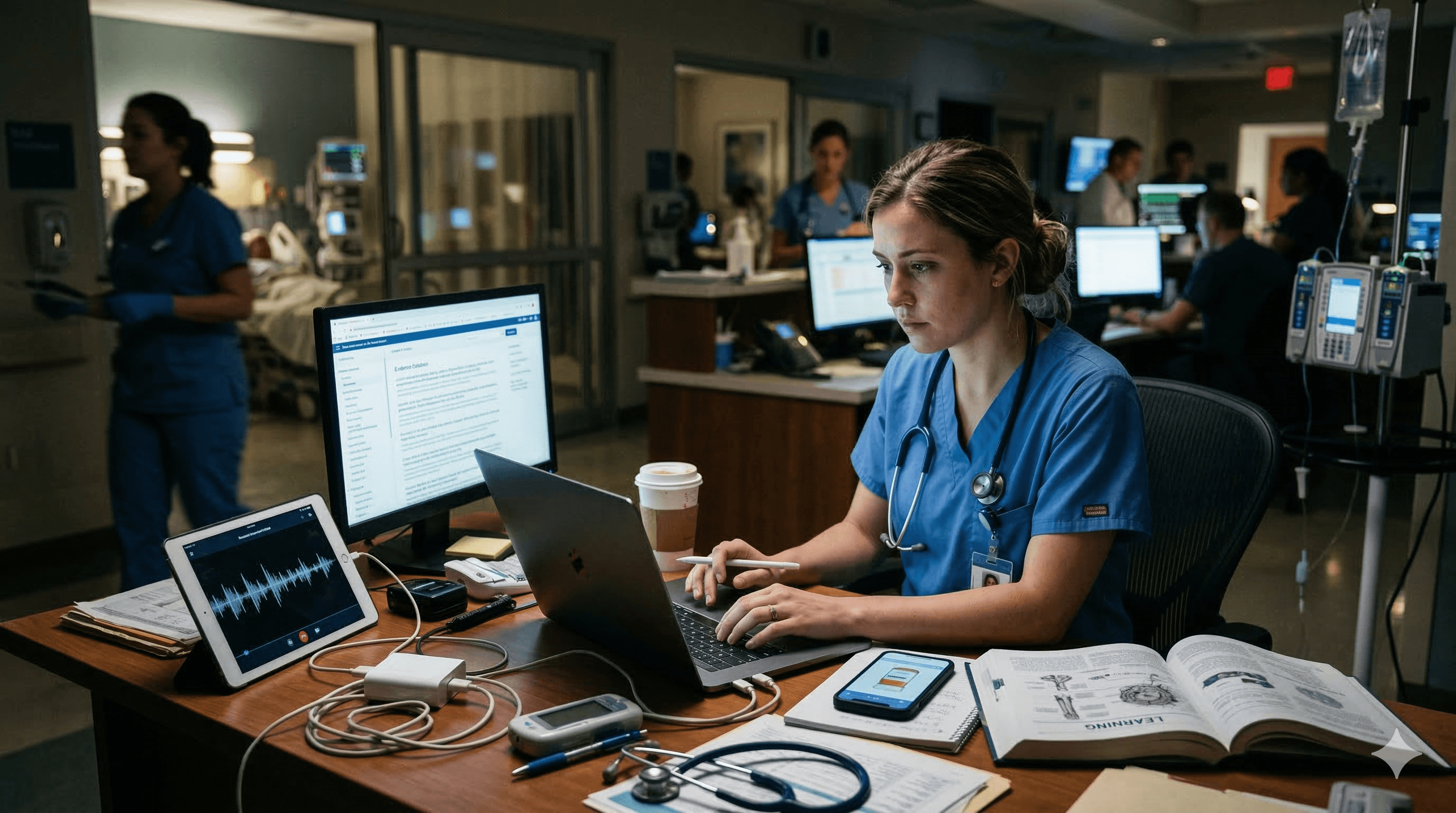

A new resident does not have one problem. They have several at once: documentation drag, partial information, endless interruptions, quick evidence checks, vague differentials, medication-risk decisions, and the uncomfortable fact that learning does not stop just because finals and Match are over.

That is why the better frame is not “which single AI tool wins?” It is: what is the right AI stack for the jobs a new resident actually has?

The most useful setup is rarely one giant dependency. It is a practical stack:

- one layer for documentation support

- one layer for evidence lookup

- one layer for broadening the differential when a case is still fuzzy

- one layer for medication safety

- one layer for retention and longer-term learning

That is also why new residents should think in systems rather than in products. The point is not to collect more subscriptions. The point is to reduce friction without outsourcing judgment.

What changes when you become a resident

The move from student to resident changes almost everything about how information feels.

You have less time.

You get interrupted more.

You see more partial information.

You carry more responsibility, even under supervision.

You document more.

You are more tired.

And the difference between “I understand this concept” and “I can act safely on it under pressure” starts to matter much more.

This is one reason residents often feel disappointed by tools that looked brilliant during revision. A product that was excellent for explanation may not be the one you want when you need a rapid, usable answer during cross-cover. Equally, a tool that feels magical for quick answers may do very little for long-term retention.

That distinction is the whole point of a stack.

It is also why broader workflow thinking matters more than brand loyalty. For the wider category view first, see What AI tool should a doctor actually use?

Job 1 — documentation support

This is the most immediately tangible AI use case for many new residents.

Documentation support is about reducing the burden of notes, after-visit summaries, inbox-style admin, letters, and routine drafting: the low-grade friction that turns a normal day into a late evening. This category includes ambient scribes and broader workflow assistants rather than evidence engines.

The core value here is simple: reclaim attention and time.

That is why documentation tools should be judged primarily on:

- whether the notes are actually usable

- how much editing burden remains

- whether the output fits the service and specialty

- whether the workflow is approved and integrated in your environment

- whether the tool saves time in reality rather than only in demos

For U.S.-facing examples, Abridge and Ambience are useful anchors because both publicly position themselves around ambient documentation, with Abridge leaning heavily into contextually aware and billable notes, and Ambience leaning into documentation and coding support. Those are powerful propositions, but they solve a different problem from evidence or reasoning tools.

For residents, the practical conclusion is straightforward: a documentation layer can be valuable, but it is not your evidence layer, your DDx layer, or your revision layer.

Job 2 — evidence lookup

Evidence lookup is a different job entirely.

This layer matters when you already roughly know what the problem is, but you want a fast, structured answer, a quick sense-check, or a source-aware route back to trusted material. It is less about “generate my note” and more about “orient me quickly and show me why”.

This is where tools like OpenEvidence and AMBOSS AI Mode become more relevant than ambient documentation products.

OpenEvidence is strongest when the user wants rapid evidence triage and broad medical search. AMBOSS AI Mode is strongest when the user wants structured, curated clarification with more explicit inline citations and a knowledge-base feel. Those are overlapping but not identical use cases. OpenEvidence publicly positions itself as an AI copilot for doctors with major content partnerships, while AMBOSS AI Mode positions itself around curated sources, inline citations, and source traceability.

For new residents, the question is usually not “which one is smartest?” It is:

- which one helps me orient quickly?

- which one makes source-checking easier?

- which one fits the way I actually work?

If you want the broader evolution of that AMBOSS model, see AMBOSS is no longer just a q-bank: what that means for doctors in 2026.

Job 3 — differential broadening

This is the layer many residents do not realise they need until they are tired, anchoring too early, or staring at a vague presentation that does not yet have a clean shape.

Differential-diagnosis tools matter most when:

- the case is still fuzzy

- premature closure is a risk

- you want to force yourself to consider alternatives

- you need more discriminating questions

- you want a more structured starting list before narrowing down

This is where DxGPT is relevant.

Publicly, DxGPT positions itself as a free diagnostic decision-support tool. It offers structured diagnostic suggestions, follow-up questions, multilingual support, and a developer layer for integration into EHRs, apps, or patient portals. It also explicitly says it should be used as decision support rather than as a replacement for professional evaluation.

That makes it useful not as “the answer”, but as a cognitive forcing function.

A DDx layer is most valuable when it helps you avoid narrowing too early. It is least valuable when you treat it as a diagnostic oracle. The right mental model is: broadening tool, not decision authority.

Job 4 — prescribing and medication safety

This is the job where residents should be most cautious about relying on a general AI layer without verification.

Medication decisions are high-stakes, context-dependent, and often easy to get subtly wrong. Dosing, interactions, contraindications, renal adjustment, route, frequency, and local formulary or policy details all matter. That makes prescribing a category where dedicated drug references remain disproportionately important.

In practice, this means your medication-safety layer should usually be a purpose-built drug reference rather than a general “doctor AI” tool.

Epocrates continues to position itself around drug information, interactions, dosing, and mobile/offline use. UpToDate Lexidrug similarly positions itself around detailed drug, interaction, and therapeutic information via mobile access.

The practical rule for residents is simple:

Use AI to orient. Use a dedicated drug reference to verify.

That does not mean general AI is useless in prescribing-related questions. It means it should not be your final stop for medication decisions.

Job 5 — revision and long-term retention

Residents still need revision.

That often surprises people. Once real work starts, it is easy to assume formal learning becomes secondary. In reality, the cadence changes, but the need does not disappear. New residents still need:

- retrieval

- weak-area repair

- pattern reinforcement

- spaced review

- better linkage between live clinical confusion and later understanding

This is where the stack needs a genuine learning layer rather than only answer generation.

AMBOSS is one obvious example because it now publicly spans both clinical-care AI and learning-oriented AI workflows, which makes it unusually relevant to doctors whose work and learning still overlap heavily.

This is also where iatroX fits most naturally.

Rather than acting as a pure documentation tool or a pure evidence-search engine, iatroX is strongest where the user wants:

- clinician education

- practical knowledge reinforcement

- movement between question-bank logic and clinical reasoning logic

- structured, explainable learning rather than pure answer generation

That is particularly relevant for new residents because internship is full of half-understood topics, and what those topics need is a clear, educational explanation rather than another passive paragraph.

The ideal stack for different types of resident

There is no universal resident stack, because not every resident has the same friction points.

Busy prelim or transitional year resident

This person usually needs:

- a lightweight documentation helper if permitted in their setting

- one quick evidence layer

- one dedicated drug-checking tool

- one low-friction learning layer for maintenance

The goal here is not maximal sophistication. It is to reduce cognitive drag quickly.

Medicine intern

This resident often benefits from the fullest stack:

- documentation support

- evidence lookup

- DDx broadening

- medication verification

- revision and reinforcement

Medicine interns are especially likely to bounce between vague problems, detailed medication decisions, and constant clarification needs, so the stack genuinely helps.

Surgical intern

The surgical intern usually needs a narrower setup:

- very strong documentation and admin support

- rapid evidence or clarification support for common perioperative issues

- medication verification

- much lighter DDx use than a medicine intern might need

For this persona, speed and workflow fit matter more than maximal informational breadth.

IMG entering a new system

This is one of the highest-value personas for a stack approach.

IMGs often need:

- explanation, not just answers

- support with local workflow expectations

- a clarification layer that reduces system friction

- reinforcement that helps medicine make sense in context

- a very reliable prescribing-check layer

That is why a stack often works better than a single brand decision. The problem is not only clinical uncertainty. It is system acclimatisation.

Outpatient-heavy resident

This resident often benefits most from:

- documentation support

- quick evidence lookup

- medication verification

- lighter revision support for common ambulatory patterns

In other words, the stack becomes narrower and more workflow-centred.

Common mistakes new residents make with AI tools

Mistake 1: judging tools only on fluency

A smooth answer is not the same thing as a usable or trustworthy answer.

Mistake 2: expecting one tool to solve every problem

Documentation, evidence lookup, DDx broadening, prescribing, and learning are different jobs. One product may touch more than one category, but very few do all of them equally well.

Mistake 3: using a general AI layer as a medication source of truth

This is where residents should be especially conservative. Verification matters.

Mistake 4: building a maximalist stack they never actually use

The best stack is low-friction. If it is too complicated to open when you are tired, it is too complicated.

Mistake 5: confusing faster answers with better learning

Residents still need retention. Easy access to information does not make spaced recall less important.

Where iatroX fits

The most accurate framing here is a modest one.

iatroX does not need to be a rival to every documentation, evidence, or drug-reference tool.

A better framing is:

Use iatroX as the layer that connects learning, clinical reasoning, and practical preparation.

If a documentation tool helps you record, and an evidence tool helps you search, iatroX fits best as the layer that helps you understand, reinforce, and think more clearly through what you are seeing.

That makes it especially useful for residents who want:

- structured explanation

- practical clinician education

- movement from isolated questions to clearer reasoning

- a bridge between question-bank habits and live clinical thinking

The most natural internal routes are:

- How iatroX works

- Academy

- Clinical Q&A Library

- What AI tool should a doctor actually use?

- AMBOSS is no longer just a q-bank: what that means for doctors in 2026

FAQs

What is the best AI tool for new residents?

Usually not one tool. The better answer is a small stack with clear roles: documentation, evidence lookup, differential broadening, medication verification, and learning.

Do residents need a DDx tool if they already have an evidence tool?

Sometimes yes. Evidence tools are strongest when you already roughly know what the problem is. DDx tools matter more when the case is still fuzzy and premature closure is the risk.

Should residents use AI for prescribing?

AI can help orient, but prescribing decisions should still be verified against dedicated medication references, local policy, and clinical supervision.

Is a documentation tool enough on its own?

No. Documentation tools remove admin burden. They do not replace evidence lookup, differential thinking, medication verification, or retention work.

Where does iatroX fit if I already use AMBOSS or OpenEvidence?

iatroX fits best as the explanation and reinforcement layer: the part of the stack that helps connect quick clarification to deeper understanding and practical clinician learning.

Bottom line

New residents do not need the “best AI tool” in the abstract.

They need a sensible stack.

Documentation, evidence lookup, differential broadening, prescribing, and retention are different jobs. The smartest setup is not the biggest one. It is the one where each layer has a clear purpose and actually gets used when you are tired, interrupted, and under time pressure.

That means:

- a documentation layer if note burden is the problem

- an evidence layer if fast source-aware checking is the problem

- a DDx layer if premature closure is the risk

- a dedicated medication layer for safety

- a learning layer so practice does not slowly disconnect from understanding

And that is exactly where iatroX becomes useful: not as a dependency, but as the layer that connects learning, clinical reasoning, and practical preparation.